The Implementation Gap: From Strategy to Infrastructure

Our recent search performance reports for the "SEO Workhorse" pillar indicate a significant shift in market maturity. While our initial framework established the "why," our data shows over 300 impressions for queries like "the seo workhorse process" and "workflow" without corresponding click-through volume. This signals that senior marketers are no longer looking for theory; they are looking for the pipeline infrastructure to execute.

In 2026, the bottleneck in SEO isn't keyword research; it's data fragmentation. As search engines move toward generative responses, the only way to maintain a competitive moat is to "SQLify" your SEO, creating a unified data environment where paid intent signals and organic performance are joined in real time.

BigQuery automation feeds into the broader cross-channel ecosystem.

The BigQuery Foundation: Why 16 Months Isn't Enough

The traditional SEO workflow relies on the Google Search Console (GSC) interface, which is limited by a 16-month data retention cap and a 1,000-row ceiling on each report. For a "Workhorse" system, this is a strategic failure. To scale, you must use BigQuery for three primary reasons:

- Unlimited Data Retention: Beyond the 16-month limit, allowing for year-over-year cohort analysis of intent shifts.

- No Row Limits: Every query, every click, and every impression is stored, providing a "high-fidelity" view of the long-tail keywords that traditional tools miss.

- Complex Data Joins: The ability to join GSC data with Google Analytics 4 (GA4) and CRM data to identify keywords that drive revenue, not just traffic.
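
To make the retention point concrete, here is a minimal Python sketch of a year-over-year comparison that only becomes possible once more than 16 months of history is on hand. The row shape `(month, query, clicks)` and the function name `yoy_click_change` are assumptions for illustration, not the actual export schema.

```python
from collections import defaultdict


def yoy_click_change(rows, month, prior_month):
    """Compare clicks per query between two months one year apart.

    rows: iterable of (month, query, clicks) tuples,
          e.g. ("2025-03", "seo workflow", 40). Hypothetical shape.
    Returns {query: (prior_clicks, current_clicks, change)} for every
    query that has clicks in at least one of the two months.
    """
    # Sum clicks per query per month.
    totals = defaultdict(lambda: defaultdict(int))
    for row_month, query, clicks in rows:
        totals[query][row_month] += clicks

    result = {}
    for query, by_month in totals.items():
        prior = by_month.get(prior_month, 0)
        current = by_month.get(month, 0)
        if prior or current:
            result[query] = (prior, current, current - prior)
    return result
```

Calling `yoy_click_change(rows, "2025-03", "2024-03")` would surface queries that gained or lost clicks against the same month a year earlier, a comparison the 16-month window leaves little room for.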

Step 1: Architecting the Data Ingestion

The first step in the Workhorse workflow is establishing a daily bulk data export from GSC and GA4 into a centralized BigQuery project. This "Digital Advertising Operating System" (DAOS) ensures that your AI models are learning from a first-party data set rather than platform-level averages.

To set this up, marketers must configure a Google Cloud project, enable the BigQuery API, and grant Search Console permission to write to that project before switching on bulk data export in the Search Console settings. The beauty of this architecture is that it captures data from the day of connection forward, creating an accumulating asset that grows in value as your "Workhorse" pillar expands.

Step 2: The Logic of URL Standardization

The primary hurdle in cross-channel reporting is URL inconsistency—parameters, protocols (HTTP vs. HTTPS), and trailing slashes often prevent data sets from matching. In SQL, we solve this with a custom transform_url function.

By stripping these parameters, we ensure that a conversion on a PPC landing page (captured in GA4) can be mapped back to the specific organic query (captured in GSC) that originally identified the user's intent. This is the core of "Information Gain" harvesting: finding the queries that are already working in "Beta" PPC campaigns and immediately prioritizing them for SEO content production.
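
The normalization logic can be sketched in a few lines. The version below is a Python illustration of the idea rather than the SQL function itself; it borrows the name `transform_url`, and its exact rules (drop protocol, query string, fragment, and trailing slash) are assumptions based on the inconsistencies listed above.

```python
from urllib.parse import urlsplit


def transform_url(url):
    """Reduce a URL to a join key: lowercase host plus path, no trailing slash.

    Drops the protocol (HTTP vs. HTTPS), the query string (e.g. ?gclid=...),
    and any fragment, so paid and organic rows land on the same key.
    """
    parts = urlsplit(url.strip())
    host = (parts.hostname or "").lower()
    path = parts.path.rstrip("/")
    return host + path


# Both variants collapse to the same key.
print(transform_url("http://Example.com/pricing/?gclid=abc123#top"))
print(transform_url("https://example.com/pricing"))
```

Whatever rules you settle on, the same function has to be applied to both sides of the join; otherwise the keys drift apart again.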

Automating "Information Gain" Harvesting

Once the pipeline is established, the "Workhorse" workflow shifts from manual analysis to automated discovery. In 2026, we utilize agentic AI workflows to monitor these BigQuery tables for specific triggers.

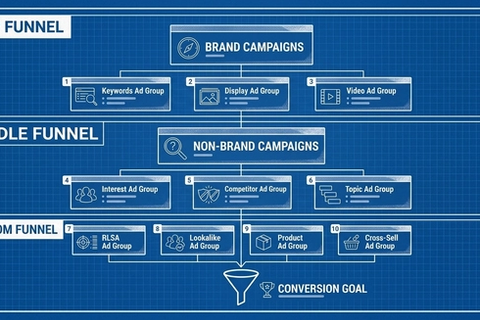

Identifying the Revenue Cluster

We run a "many-to-one" SQL join that groups GSC search queries by their landing page. The workflow is designed to flag "Leader Keywords"—terms with high conversion rates in PPC but low organic position (outside the top 10). These terms are automatically pushed to a "Content R&D" dashboard in Looker Studio.
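
As a rough illustration of the flagging rule, the following Python sketch joins organic rows to paid performance by landing page and keeps the queries that convert in PPC but rank outside the top 10. The `PpcStats` type, the page-level join, and the 5% conversion threshold are all assumptions made for the example; the production version would live in SQL against your own tables.

```python
from dataclasses import dataclass


@dataclass
class PpcStats:
    clicks: int
    conversions: int


def find_leader_keywords(gsc_rows, ppc_by_page, min_conv_rate=0.05, max_top_position=10):
    """Flag queries whose landing page converts in PPC but ranks poorly organically.

    gsc_rows: iterable of (query, landing_page, avg_position) tuples;
              many queries may map to one page (the many-to-one join).
    ppc_by_page: dict of landing_page -> PpcStats (hypothetical shape).
    """
    leaders = []
    for query, page, position in gsc_rows:
        stats = ppc_by_page.get(page)
        if stats is None or stats.clicks == 0:
            continue  # no paid data to join against
        conv_rate = stats.conversions / stats.clicks
        if conv_rate >= min_conv_rate and position > max_top_position:
            leaders.append((query, page, round(conv_rate, 4), position))
    # Highest-converting pages first.
    return sorted(leaders, key=lambda row: row[2], reverse=True)
```

Landing pages are assumed to be normalized already, so that the paid and organic sides share the same key.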

Semantic Grouping and Intent Mapping

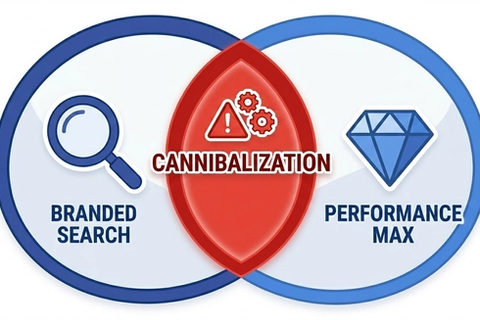

Rather than grouping keywords by how they look, the workflow uses Large Language Models (LLMs) like Gemini via Vertex AI to group queries by "SERP Similarity." If two keywords trigger overlapping results in the top 10, they belong in the same cluster. This keeps the Workhorse pillar aligned with how Google’s AI understands topics, preventing content cannibalization and signaling topical authority.
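
The overlap rule itself can be shown without any model in the loop. The sketch below is a plain set-overlap clustering in Python, not the LLM-based grouping described above; the `min_shared` threshold of three shared URLs and the input shape are assumptions for illustration.

```python
def cluster_by_serp_overlap(serps, min_shared=3):
    """Group keywords whose top-10 result sets share at least `min_shared` URLs.

    serps: dict of keyword -> list of ranked result URLs (hypothetical input).
    Uses union-find so overlap chains (A~B, B~C) end up in one cluster.
    """
    keywords = list(serps)
    parent = {k: k for k in keywords}

    def find(k):
        while parent[k] != k:
            parent[k] = parent[parent[k]]  # path halving
            k = parent[k]
        return k

    top10 = {k: set(serps[k][:10]) for k in keywords}
    for i, a in enumerate(keywords):
        for b in keywords[i + 1:]:
            if len(top10[a] & top10[b]) >= min_shared:
                parent[find(a)] = find(b)

    clusters = {}
    for k in keywords:
        clusters.setdefault(find(k), []).append(k)
    return sorted(sorted(group) for group in clusters.values())
```

Each resulting cluster is a candidate for a single page, which is what keeps two articles from competing for the same set of results.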

The Rise of Agentic SEO Operations

In 2026, the transition from "Assisted AI" to "Agentic AI" means that the workflow is managed by specialized digital teammates. These agents do more than follow instructions; they plan and execute multi-step tasks.

- The Campaign Intelligence Agent: Automatically connects BigQuery data across the marketing stack to surface performance anomalies and narrative summaries of what is working.

- The Intent Intelligence Agent: Analyzes the context behind inbound engagements to recommend the "next-best action" for the content team.
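
For a sense of what "surfacing performance anomalies" can mean at its simplest, here is a hedged Python sketch that flags days whose clicks deviate sharply from the trailing week. It is a basic z-score rule chosen for illustration, not a description of how any particular agent works; the window, threshold, and input shape are assumptions.

```python
from statistics import mean, stdev


def find_anomalies(daily_clicks, window=7, threshold=3.0):
    """Flag days whose clicks sit more than `threshold` standard deviations
    from the mean of the preceding `window` days.

    daily_clicks: list of (date_string, clicks) in chronological order.
    """
    anomalies = []
    for i in range(window, len(daily_clicks)):
        history = [clicks for _, clicks in daily_clicks[i - window:i]]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            continue  # flat history; z-score is undefined
        day, clicks = daily_clicks[i]
        if abs(clicks - mu) / sigma > threshold:
            anomalies.append((day, clicks))
    return anomalies
```

An agent built on a rule like this would still need a step that explains the flagged day, which is where the narrative summary comes in.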

By automating these processes, a lean team can publish three times a week while maintaining the quality standards of a much larger enterprise.

Conclusion: Content as Infrastructure

The "SEO Workhorse Workflow" is more than a checklist; it is an organizational shift toward treating content as "pipeline infrastructure." By building a real-time data pipeline in BigQuery, you eliminate the guesswork of keyword research and ensure that every organic dollar is spent on terms with a proven ability to drive sales.

The marketers who win in 2026 will be those who stop operating in silos and start architecting data-driven systems. Your Workhorse is waiting—it’s time to build the pipeline.

Strategic Takeaway: If your SEO team is still working out of spreadsheets and manual GSC exports, you are paying a "transparency tax." Automate your harvesting in BigQuery to reclaim 10–15 hours per week for high-level strategy.

Frequently Asked Questions (FAQ)

Why should I use BigQuery for SEO instead of just using the Google Search Console interface?

BigQuery removes the 16-month data retention limit and the 1,000-row report ceiling inherent in the GSC interface. It allows you to join organic search data with session-level revenue from GA4, identifying which specific queries are driving actual sales, not just clicks.

How does the "transform_url" function help in cross-channel reporting?

Standardizing URLs is critical because tracking parameters (like ?gclid) can cause data to fragment.[4] A custom SQL function strips these parameters, ensuring that PPC conversion data and organic search signals are joined on the same base URL for a "single source of truth."

What is "Information Gain" harvesting in the context of a data workflow?

It is the automated process of identifying high-converting keywords from your PPC "Beta" campaigns that have little to no organic visibility. By prioritizing these terms for content production, you ensure your SEO strategy is fueled by empirical revenue data rather than volume estimates.

How do agentic workflows reduce the manual hours required for SEO?

Agentic AI can independently handle multi-stage processes like data normalization, performance anomaly detection, and intent classification. This allows small teams to manage complex cross-channel pipelines that would typically require a massive operations department.